NEO-JI AGENTIC AI.

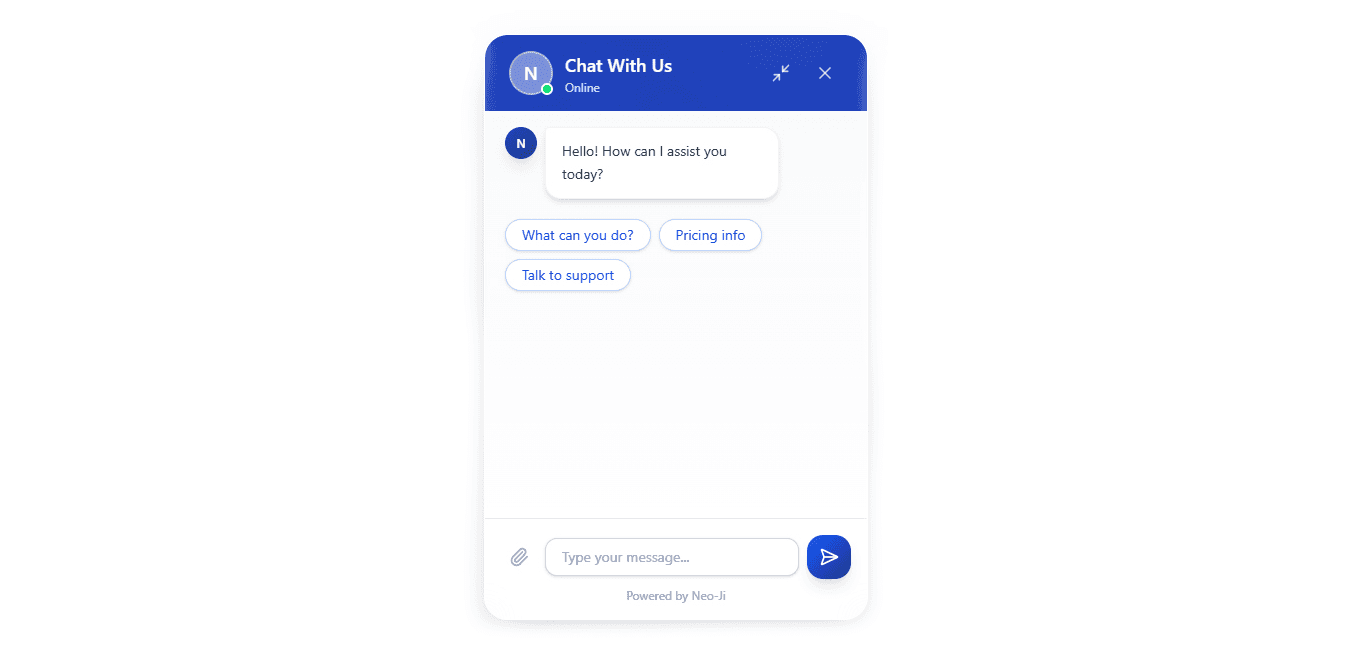

A drop-in conversational AI widget for any website — voice, text, and real-time chat in one script tag.

THE ARCHITECTURE

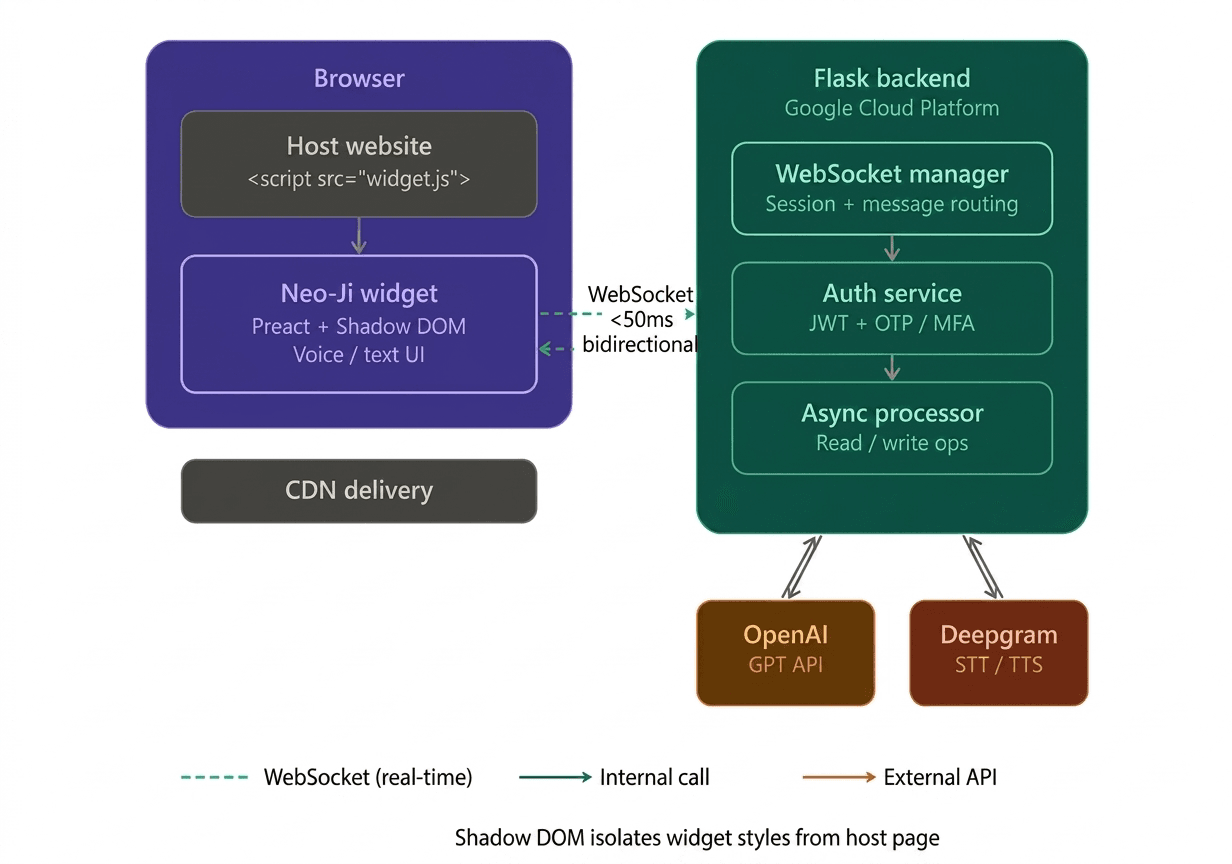

Neo-Ji is built as a two-layer system: a lightweight embeddable frontend widget and a Python Flask backend that orchestrates AI, voice, and session services.

The frontend widget is built with Preact and bundled for CDN distribution. It renders inside a Shadow DOM root, which encapsulates its markup and styles from the host page and avoids CSS conflicts regardless of the site it is embedded on. Website owners integrate it via a single script tag, with no build step or framework dependency required.

The backend is a Flask API server deployed on Google Cloud Platform. It manages WebSocket connections for real-time bidirectional messaging, routes conversation turns to the OpenAI GPT API, and handles Deepgram speech-to-text and text-to-speech streams for voice interaction.
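The routing described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the project's actual code: the `TurnRouter` class, the message shape (`type`, `text`, `audio`), and the three stub providers are all hypothetical stand-ins for the OpenAI and Deepgram integrations, whose real client calls are not shown.

```python
import asyncio
from dataclasses import dataclass
from typing import Awaitable, Callable

# Hypothetical provider signatures; the real OpenAI and Deepgram clients
# are deliberately not modelled here.
Transcribe = Callable[[bytes], Awaitable[str]]
Complete = Callable[[str], Awaitable[str]]
Synthesize = Callable[[str], Awaitable[bytes]]


@dataclass
class TurnRouter:
    """Routes one conversation turn through the injected services."""
    transcribe: Transcribe   # speech-to-text stand-in
    complete: Complete       # language-model stand-in
    synthesize: Synthesize   # text-to-speech stand-in

    async def handle(self, message: dict) -> dict:
        kind = message.get("type")
        if kind == "text":
            reply = await self.complete(message["text"])
            return {"type": "text", "text": reply}
        if kind == "audio":
            # Voice turn: audio in -> transcript -> reply text -> audio out.
            transcript = await self.transcribe(message["audio"])
            reply = await self.complete(transcript)
            audio = await self.synthesize(reply)
            return {"type": "audio", "text": reply, "audio": audio}
        return {"type": "error", "text": f"unsupported message type: {kind!r}"}


# Stub providers so the sketch runs without network access.
async def fake_transcribe(audio: bytes) -> str:
    return audio.decode("utf-8")


async def fake_complete(prompt: str) -> str:
    return f"echo: {prompt}"


async def fake_synthesize(text: str) -> bytes:
    return text.encode("utf-8")


router = TurnRouter(fake_transcribe, fake_complete, fake_synthesize)
```

Injecting the providers as plain async callables keeps the routing logic testable in isolation; in a real deployment each stub would be replaced by a wrapper around the corresponding service client.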

Async processing was implemented across read/write operations to handle concurrent users without bottlenecks, resulting in a 35% improvement in API throughput.
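To illustrate why overlapping I/O waits helps under concurrent load, here is a small self-contained sketch using Python's standard `asyncio`. The `fake_io` coroutine and the 50 ms delay are assumptions chosen for illustration; the sketch does not reproduce the project's Flask endpoints or its measured 35% figure.

```python
import asyncio
import time

IO_DELAY_S = 0.05  # assumed latency of one read/write; illustrative only


async def fake_io(session_id: int) -> int:
    """Stand-in for a non-blocking database or API call."""
    await asyncio.sleep(IO_DELAY_S)
    return session_id


async def serve_sequentially(n: int) -> float:
    """Handle n sessions one after another and return the elapsed seconds."""
    start = time.perf_counter()
    for i in range(n):
        await fake_io(i)  # each call waits for the previous one to finish
    return time.perf_counter() - start


async def serve_concurrently(n: int) -> float:
    """Handle n sessions at once and return the elapsed seconds."""
    start = time.perf_counter()
    await asyncio.gather(*(fake_io(i) for i in range(n)))  # waits overlap
    return time.perf_counter() - start


if __name__ == "__main__":
    sequential = asyncio.run(serve_sequentially(10))
    concurrent = asyncio.run(serve_concurrently(10))
    print(f"sequential: {sequential:.2f}s, concurrent: {concurrent:.2f}s")
```

In the sequential version the total time grows with the number of sessions, while in the concurrent version the waits overlap, so the total stays close to a single I/O delay.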

Key Technical Decisions

Preact over React: Preact was chosen for the widget layer due to its significantly smaller bundle size (~3 kB vs ~45 kB), which is critical for a CDN-distributed embed that must load fast on any third-party site.

Shadow DOM for style isolation: Rather than relying on CSS namespacing or scoped classes, Shadow DOM provides hard browser-level encapsulation. This was the only reliable way to guarantee the widget renders correctly across arbitrary host pages with unknown stylesheets.

WebSockets over HTTP polling: Polling introduced visible lag in conversational turns. A persistent WebSocket connection eliminated this, achieving message latency under 50 ms and making the chat feel instant.

Deepgram for voice: Deepgram was selected over alternatives for its streaming STT accuracy and low-latency TTS, enabling a full-duplex voice experience directly in the browser widget without requiring any native app or plugin.

INTERFACE GALLERY

TECHNICAL CHALLENGES

01. Style isolation across unknown host pages

Embedding a widget on arbitrary third-party websites means encountering unpredictable CSS environments. Standard scoped class approaches were unreliable. Shadow DOM was adopted to enforce hard browser-level style encapsulation, preventing leakage in both directions and ensuring the widget always renders correctly regardless of the host page's stylesheet.

02. Achieving real-time latency at scale

Early prototypes used HTTP polling for message delivery, which introduced noticeable lag between user input and AI response. Switching to a persistent WebSocket connection removed the per-request overhead of polling and reduced round-trip message latency to under 50 ms. Async processing was also applied across Flask endpoints to prevent I/O blocking under concurrent sessions, improving overall API throughput by 35%.
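The lag that polling introduces can be estimated with a simple model: a reply that becomes ready between two polls has to wait for the next poll tick, whereas a pushed message is sent as soon as it is ready. The sketch below is a back-of-the-envelope calculation only; the polling intervals are hypothetical, network transit time is ignored, and it is not a measurement of the system described here.

```python
import math


def polling_delay(ready_at: float, interval: float) -> float:
    """Seconds a message waits until the next poll tick picks it up."""
    next_poll = math.ceil(ready_at / interval) * interval
    return next_poll - ready_at


def mean_polling_delay(interval: float, samples: int = 1000) -> float:
    """Average wait when ready times are spread evenly across one interval."""
    times = [(i + 0.5) * interval / samples for i in range(samples)]
    return sum(polling_delay(t, interval) for t in times) / samples


if __name__ == "__main__":
    for interval in (0.25, 0.5, 1.0):  # hypothetical polling intervals, seconds
        avg_ms = mean_polling_delay(interval) * 1000
        print(f"poll every {interval:.2f}s: about {avg_ms:.0f} ms average added wait")
```

Under this model the average added wait is half the polling interval, so shortening the interval trades latency for more requests, while a persistent connection avoids the wait altogether.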

IMPACT & METRICS